MW&A continues to outperform Federal and UN IPCC climate projections

Since 2014, MW&A has developed an array of products that now overlap with, and outperform, Federal and UN IPCC climate forecasts. These include:

- drought and pluvial forecasts
- temperature forecasts
- forecasts for the Pacific Decadal Oscillation (PDO), an ocean index impacting climate around the globe
- forecasts for the Atlantic Multidecadal Oscillation (AMO), another ocean index impacting climate around the globe

Our forecasts for the PDO and the AMO offer an alternative to the conclusions of the recent National Academy of Sciences (NAS) publication “Frontiers in Decadal Climate Variability”. That workshop report concludes that these ocean indexes cannot be forecast without significant additional funding and many years of further study.

The apparent contradiction may arise because federally sponsored climate forecasting efforts rest primarily on global circulation model (GCM) exercises. GCMs are impressive computational systems, yet after decades of development and growth they remain costly [1] and opaque [2], and their forecasts continue to fall short of their promised potential.

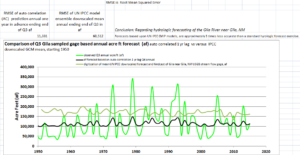

For example, the first figure is adapted from a downscaled set of UN IPCC GCM runs (CMIP series) applied to history matching of Gila River flows in southern New Mexico [3]. Neither the cost nor the accuracy of the model simulations has been published. Here I attempt at least to determine the study’s accuracy.

In contrast to accepted practice for GCMs, most other industry-standard applied modeling studies include a time series of the observed data. This allows easy visual comparison of the model’s skill. Because that is missing in this case, I have reproduced the study’s primary model result in olive green and overlaid the actual observed data in bright green.

To fill in some of the missing accuracy information, I calculated the root mean squared error (RMSE) of the study’s projections against the actual river record. I then conducted a standard autoregression hydrology exercise and compared its output (the bold black curve) with the actual river record as well. The figure documents that the standard conventional exercise was 500% more accurate than the GCM-based hindcast.
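The RMSE comparison described above can be sketched in a few lines of Python. This is a minimal sketch, not the exact procedure behind the figure: it assumes the "standard autoregression" is a lag-1 autoregressive model, AR(1), fitted by ordinary least squares to annual flows and used for one-step-ahead hindcasts. The function names `rmse`, `fit_ar1`, and `ar1_hindcast` are illustrative, and the flow values and the flat model hindcast are hypothetical placeholders, not Gila River data. The post does not say how the 500% figure was derived; the RMSE ratio printed at the end is only one plausible way to express such a comparison.

```python
import math


def rmse(predicted, observed):
    """Root mean squared error between two equal-length, non-empty sequences."""
    if len(predicted) != len(observed) or not observed:
        raise ValueError("sequences must be non-empty and of equal length")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


def fit_ar1(series):
    """Fit x[t] = c + phi * x[t-1] by ordinary least squares.

    Assumption: AR(1) stands in for the unspecified "standard autoregression".
    Needs at least 3 values and a non-constant lagged series. Returns (c, phi).
    """
    x_prev, x_next = series[:-1], series[1:]
    n = len(x_prev)
    mean_prev = sum(x_prev) / n
    mean_next = sum(x_next) / n
    cov = sum((a - mean_prev) * (b - mean_next) for a, b in zip(x_prev, x_next))
    var = sum((a - mean_prev) ** 2 for a in x_prev)
    phi = cov / var
    c = mean_next - phi * mean_prev
    return c, phi


def ar1_hindcast(series, c, phi):
    """One-step-ahead hindcast: predict each year from the previous observed year."""
    return [c + phi * prev for prev in series[:-1]]


# Hypothetical annual flows (illustrative numbers only, not Gila River data).
observed = [120.0, 95.0, 140.0, 80.0, 110.0, 150.0, 90.0, 100.0]
# Hypothetical flat model hindcast for the same years.
model = [100.0] * len(observed)

c, phi = fit_ar1(observed)
ar_pred = ar1_hindcast(observed, c, phi)

# The AR hindcast starts in the second year, so score both on years 2..n.
rmse_model = rmse(model[1:], observed[1:])
rmse_ar = rmse(ar_pred, observed[1:])
print(f"model RMSE = {rmse_model:.1f}, AR(1) RMSE = {rmse_ar:.1f}")
print(f"RMSE ratio (model / AR) = {rmse_model / rmse_ar:.2f}")
```

Because the AR(1) coefficients here are fitted and scored on the same years, its RMSE is an in-sample figure that tends to flatter the method; fitting on an earlier period and scoring on a later one would give a fairer test against a model hindcast.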

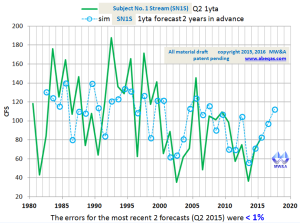

Although the conventional approach was more accurate, it still conspicuously fails to capture the highs and lows of the time series. Notably, MW&A’s methods often produce forecasts and hindcasts that are several times more accurate than that conventional method. A recent post and the second image detail our results for a similar-sized stream nearby, showing much greater fidelity across the range of highs and lows.

Comparing this image with the previous one suggests the significant benefits that better forecasting would bring. In this case, for example, forecasts are made 2 years in advance (blue circles), yet they still largely anticipate the year-to-year swings in moisture. There appear to be no other hydrologic forecasting systems in place today that can document this level of forecast accuracy.

References

[1] Among the costs is the deployment of supercomputing server farms, some of which may use more electrical power than a medium-sized town. For example, a New York Times article describes exascale supercomputing initiatives for GCMs. It appears that near-future GCM supercomputing farms will require as much electrical energy as small cities, solely to produce climate forecasts.

Other costs include large numbers of specialists, engineers, scientists, and support staff. In some cases, years are required to set up computer runs, execute them, and process, interpret, and publish the results. Further significant costs come from downscaling those global results in an attempt to forecast regional and local conditions. See the Gila River example profiled in this post.

[2] Opacity is the reason most people accept that GCMs have been accurate. As the example in this post shows, their accuracy can be worse than that of long-favored standard techniques. Apparent GCM accuracies are also spurious, as a result of the practice of reinitialization, which is described briefly in this reference:

Fricker, T.E., C.A.T. Ferro, and D.B. Stephenson, 2013, “Three recommendations for evaluating climate predictions”, Meteorological Applications 20: 246–255, DOI: 10.1002/met.1409

Because the reinitialization practice hides poor model skill, the forecast exercises are opaque.

[3] Gutzler, D.S., 2013, “Streamflow Projections for the upper Gila River”, for the New Mexico Interstate Stream Commission, UNM Contract No. 37676
